Bibliocards

A collector utility that became a real product, then forced a rethink of its infrastructure, economics, and platform architecture.


Overview

Bibliocards started as a niche utility for K-pop photocard collectors. It later became a real product with recurring usage, real infrastructure costs, and technical decisions that could no longer be treated as simple side-project choices.

What makes the project important in this portfolio is not only that I built it alone. It is that I had to keep it viable as usage grew, margins stayed tight, and the original operating model stopped making sense.

From niche utility to real product

Collectors needed a cleaner way to browse, track, and reference K-pop photocards across a fragmented ecosystem of spreadsheets, fan-made lists, and inconsistent image sources.

In the beginning, that was enough. The app was useful, but still small enough to behave like a side project.

That changed in April 2023.

At that point, Bibliocards was serving around 600 daily users. Then traffic jumped to more than 6,000 daily users, with a large share of that audience coming from East Asia. That was also the moment when my quiet side project started behaving like something I actually had to operate.

Once people start using a product every day, new kinds of problems appear: scaling issues, infrastructure costs, and operational responsibilities. Bibliocards had reached that point.

The first operating model

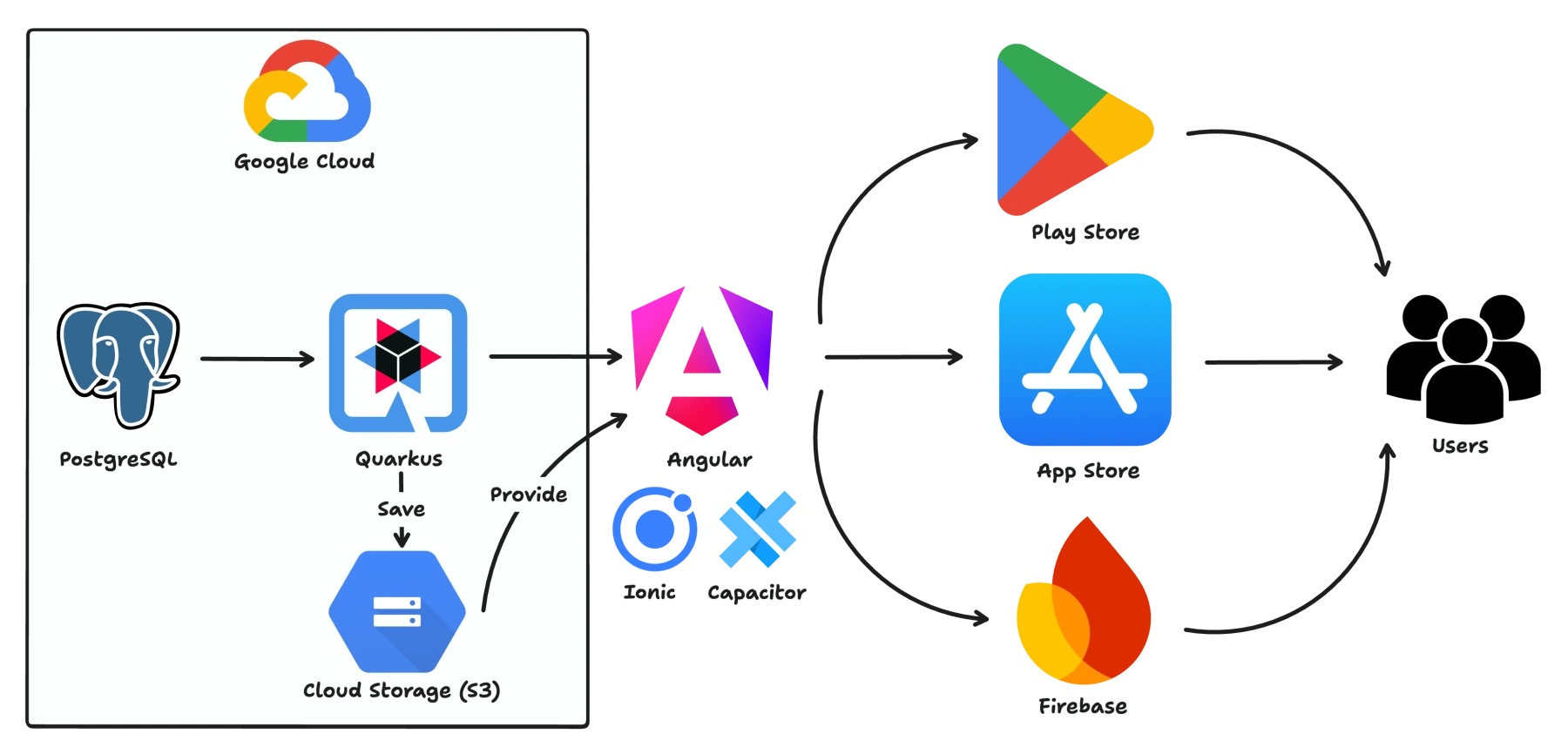

The original platform ran on Google Cloud Platform (GCP):

- Cloud Run for the backend

- Cloud SQL for the database

- Firebase Hosting for the web frontend

- Cloud Storage for image storage

That setup made sense at first. It was fast to launch, convenient to maintain, and good enough for an early product that still needed validation.

At around 600 daily users, it remained manageable. The problem was that this architecture was optimized for early convenience, not for a sudden increase in traffic under tight revenue constraints.

This diagram shows the original operating model that held up while the product was still small.

The break point

When usage increased sharply, the managed cloud setup reacted exactly the way it was designed to react: it scaled.

But it did not scale in a way that matched the economics of the product.

Cloud Run started spreading load across more instances, which increased costs and amplified cold-start behavior. Cloud SQL was running on a small instance with limited headroom, and the connection pool became a bottleneck under pressure.
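The sketch below shows one common way a small database runs out of connections. It uses hypothetical names and numbers, not Bibliocards' actual configuration. Each Cloud Run instance runs its own process with its own connection pool, so total connection demand grows with the instance count. A helper like this caps the per-instance pool so that the worst case stays within the database's connection limit.

```typescript
// Hypothetical sizing helper (not part of Bibliocards): each backend instance
// holds its own pool, so the worst-case number of open database connections
// is maxInstances * poolSizePerInstance. This returns the largest pool size
// per instance that keeps that product within the database's limit.
export function maxPoolSizePerInstance(
  dbMaxConnections: number, // connection limit configured on the database
  reservedConnections: number, // kept free for admin tools, migrations, etc.
  maxInstances: number, // autoscaling cap on the backend service
): number {
  if (!Number.isInteger(maxInstances) || maxInstances < 1) {
    throw new Error('maxInstances must be a positive integer');
  }
  const available = dbMaxConnections - reservedConnections;
  const perInstance = Math.floor(available / maxInstances);
  if (perInstance < 1) {
    // Even one connection per instance would exceed the limit.
    throw new Error('connection budget too small for this many instances');
  }
  return perInstance;
}
```

Take a limit of 100 connections with 10 held in reserve and an instance cap of 20: `maxPoolSizePerInstance(100, 10, 20)` returns 4. Raising the cap to 30 drops it to 3. The more the platform scales out, the thinner each pool gets.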

From a technical point of view, everything was still “working”. From a product and economic perspective, it was breaking.

At that stage, GCP costs were around 50 to 60 EUR per month while Bibliocards was generating less than 20 EUR per month in revenue. The issue was no longer just backend performance. The issue was that the operating model had become structurally unhealthy.

The hard tradeoff

For a period, I removed the app from some stores in order to reduce traffic and regain control over the platform.

That was an uncomfortable decision, but it was also a useful one. It forced me to treat Bibliocards less like a lightweight side project and more like a product with margins, constraints, and operational limits.

I relaunched the app in the stores in September 2023. Growth did not immediately return at the same level, but it came back gradually over the months that followed.

That phase changed the project. From then on, Bibliocards was not just something to build. It was something to operate responsibly.

The infrastructure reset

In June 2024, I made what became one of the best infrastructure decisions on the project: I moved away from the original GCP setup and onto a virtual private server (VPS).

It was a move I had delayed for a long time. Managed cloud had felt safer and more familiar. In practice, the VPS turned out to be both simpler and dramatically cheaper.

The result was immediate:

- Infrastructure cost dropped from ~55 EUR/month to 7 EUR/month

- The VPS handled the workload comfortably and remained largely underutilized, leaving substantial headroom for future growth

- The platform regained a much healthier margin profile
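Here is the arithmetic behind the cost drop, taking the ~55 EUR/month figure above as the GCP baseline and 7 EUR/month for the VPS:

```latex
\begin{aligned}
\text{monthly saving} &= 55 - 7 = 48\ \text{EUR} \\
\text{relative reduction} &= \frac{48}{55} \approx 87\% \\
\text{yearly saving} &= 48 \times 12 = 576\ \text{EUR}
\end{aligned}
```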

This was not just a hosting change. It was a reset of the operating model.

If I decide to document that migration in more depth, it will likely live as a separate engineering article focused on cost structure, operational tradeoffs, and why leaving managed cloud was the right decision for this product.

Why V2 exists

Once Bibliocards had proven demand, the next challenge was no longer only about reducing cost.

The product experience, the internal workflows, and the backend boundaries all needed to catch up with what the platform had become.

The mobile app still carried the feel of an older Android-era product. Internal moderation and administration workflows needed a dedicated operating surface. The backend needed clearer boundaries. And parts of the business logic needed to become both simpler and more robust.

V2 started from that reality: the product had outgrown its first shape.

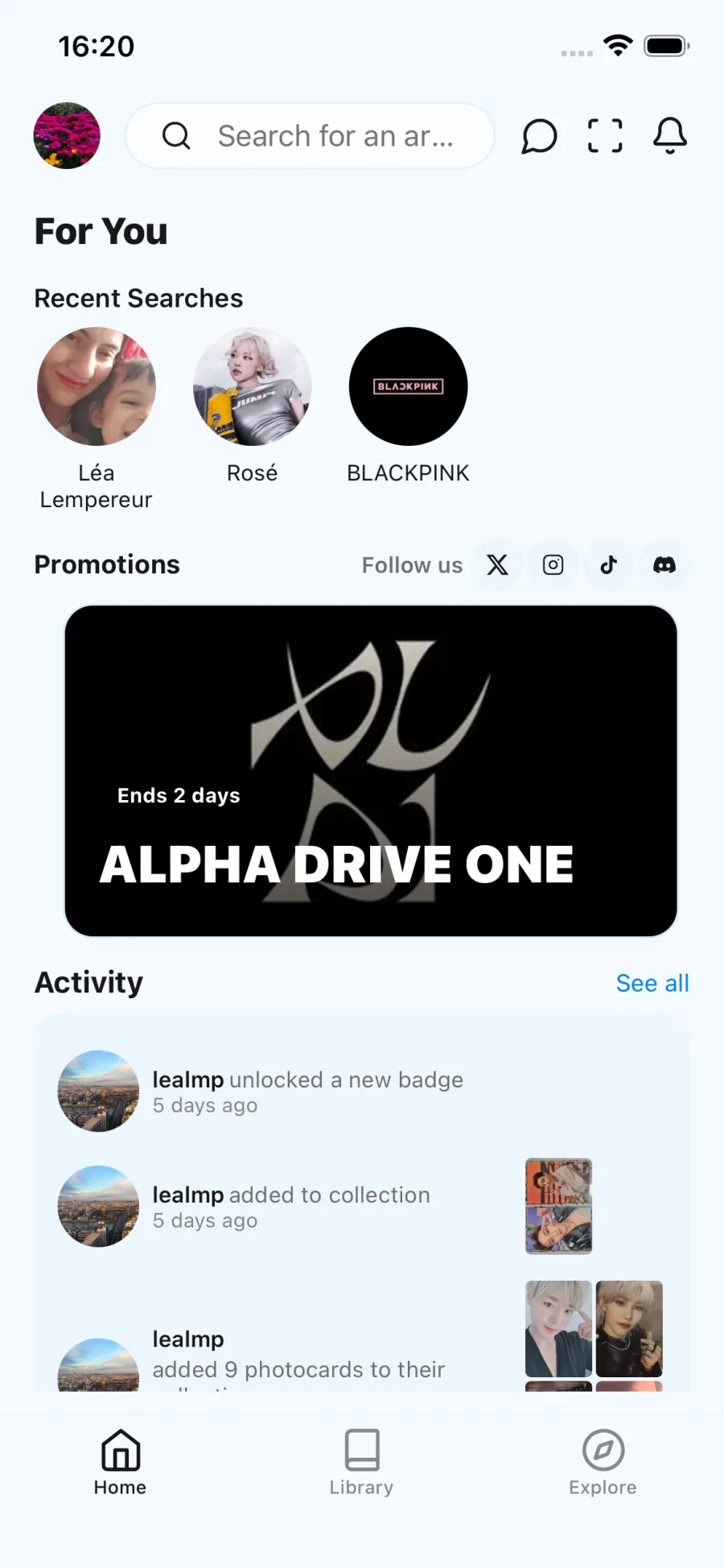

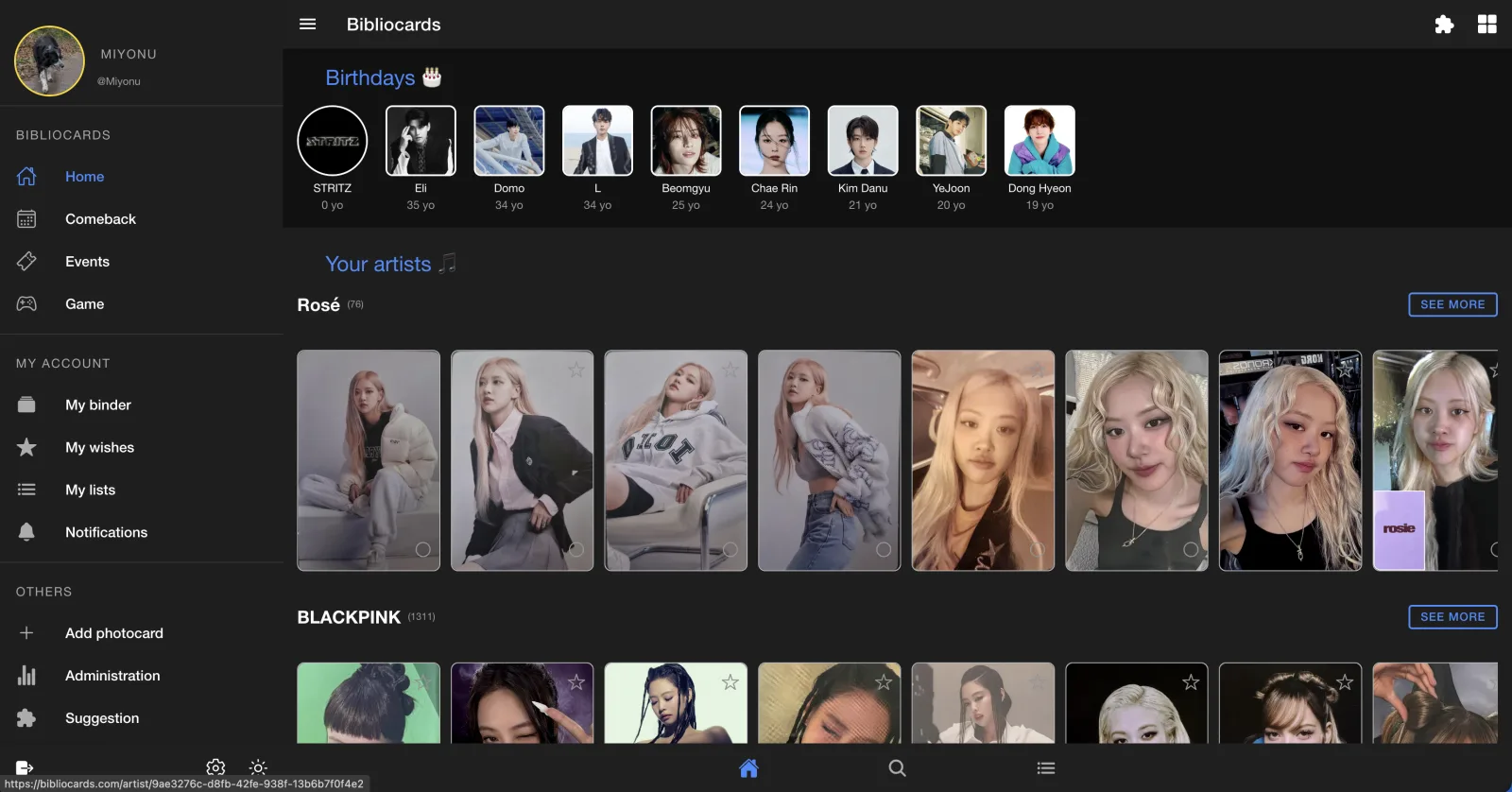

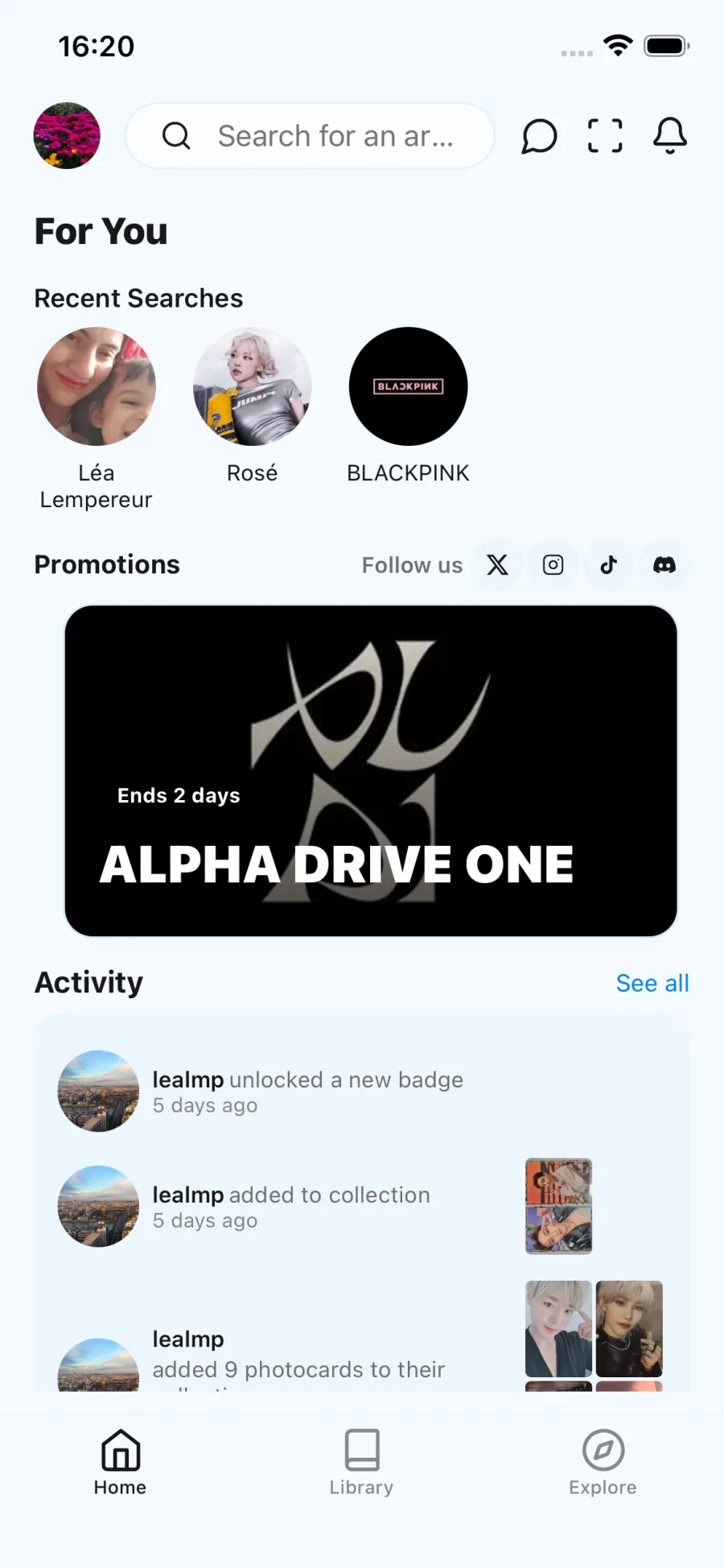

Why the interface had to change

One part of the rewrite is simply about product quality.

The first app did its job, but it no longer matched the level of polish I wanted for a product that had already proven usage. V2 is not a cosmetic refresh. It is an attempt to make the product feel modern, coherent, and pleasant to use.

This comparison matters because the rewrite changed the product surface as much as the internals.

The new mobile app is being rebuilt with React Native and Tamagui, which gives me a more consistent UI system and a much better foundation for a polished experience.

That visual redesign matters because product maturity is not only about backend reliability. It is also about whether the user experience feels current, clear, and worth returning to.

Rebuilding the platform

The rewrite is not limited to the mobile app.

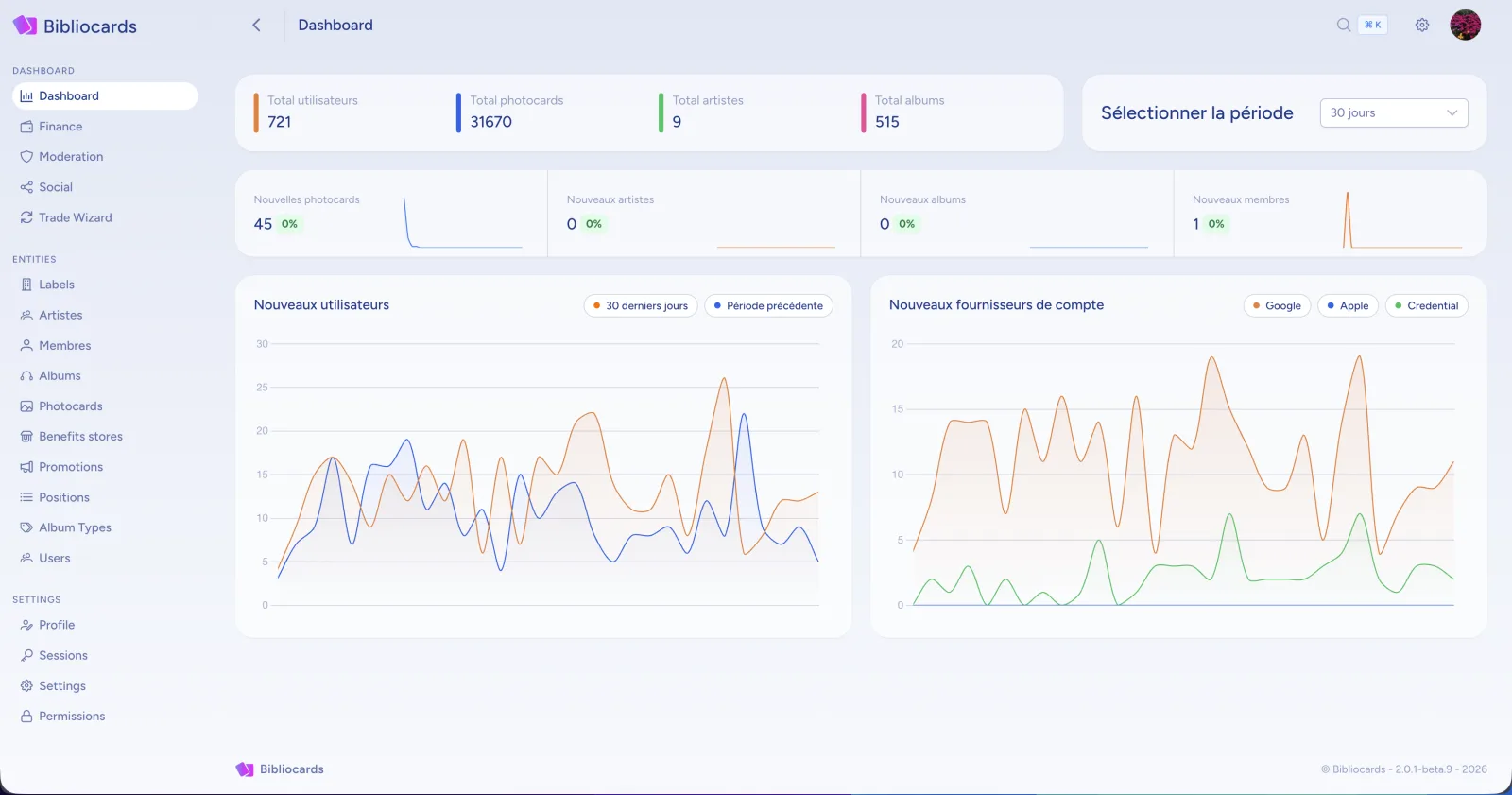

For internal operations, I built a dedicated admin back office in Angular 21 for moderators and administrators. That creates a clearer separation between the public product surface and the internal operating surface.

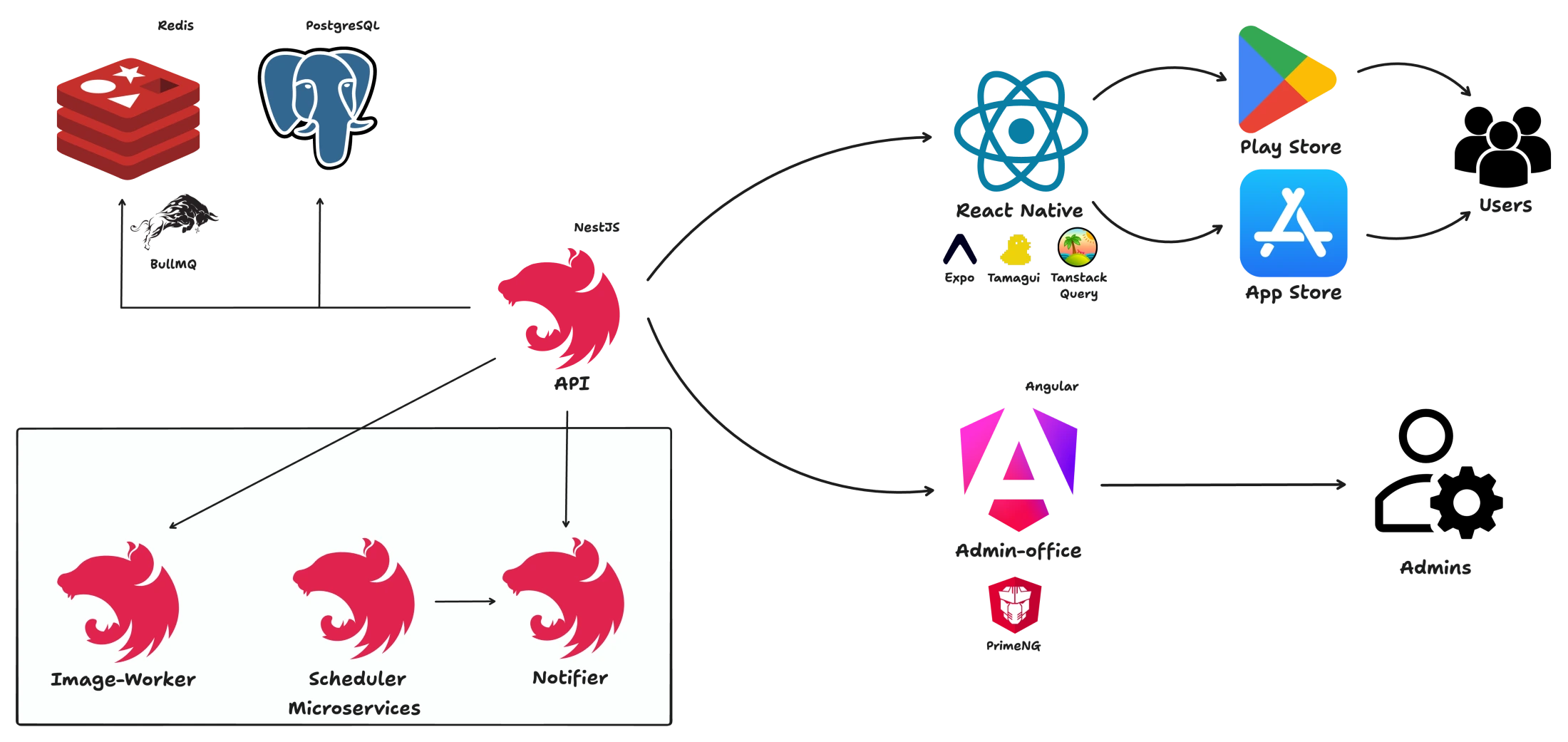

The backend changed significantly as well. The first version was built around a Quarkus monolith. In V2, I moved toward a service-oriented backend made of four NestJS applications:

- API — the main public surface

- Scheduler — cron-driven workflows

- Notifier — push notifications

- Image Worker — image processing and scan-related tasks

These services communicate through Redis and BullMQ. That choice has been one of the most decisive technical improvements in the current version of the platform, because it gave me a simple and reliable backbone for asynchronous work across service boundaries.
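The sketch below illustrates what "explicit boundaries" can mean in practice. It assumes a shared module that all four services import, so that queue names and job payloads stay consistent on both sides of a queue. The queue names, payload fields and `parseScanJob` are hypothetical, not taken from the Bibliocards codebase. The BullMQ wiring itself (`new Queue(...)`, `new Worker(...)`) is left out so the sketch runs without Redis.

```typescript
// Hypothetical shared module imported by the API, Scheduler, Notifier and
// Image Worker. Queue names and payload fields are illustrative assumptions.

export const QUEUES = {
  IMAGE_SCAN: 'image-scan',
  NOTIFICATIONS: 'notifications',
} as const;

export type QueueName = (typeof QUEUES)[keyof typeof QUEUES];

export interface ScanJobData {
  jobId: string;
  imageUrl: string; // where the Image Worker fetches the source image
  userId: string;
}

export interface NotificationJobData {
  userId: string;
  title: string;
  body: string;
}

// One payload type per queue, so producers and consumers agree at compile time.
export interface JobPayloads {
  'image-scan': ScanJobData;
  notifications: NotificationJobData;
}

// Fails to compile if a queue name has no entry in JobPayloads.
export type PayloadFor<Q extends QueueName> = JobPayloads[Q];

// Job data crosses a process boundary as JSON stored in Redis, so the
// consumer re-checks its shape instead of trusting the static type.
export function parseScanJob(data: unknown): ScanJobData {
  if (typeof data !== 'object' || data === null) {
    throw new Error('scan job payload must be an object');
  }
  const { jobId, imageUrl, userId } = data as Record<string, unknown>;
  if (
    typeof jobId !== 'string' ||
    typeof imageUrl !== 'string' ||
    typeof userId !== 'string'
  ) {
    throw new Error('scan job payload is missing a required string field');
  }
  return { jobId, imageUrl, userId };
}
```

With BullMQ, the API side would create a `Queue` named `QUEUES.IMAGE_SCAN` and call `queue.add(jobName, data)`. The Image Worker's `Worker` processor would then run `parseScanJob(job.data)` before doing any work.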

The rewrite also gave me the opportunity to revisit the business layer itself. The goal was not to make the system more abstract. It was to simplify the model where possible while making it more robust and easier to reason about.

The V2 architecture became easier to operate because the boundaries became explicit.

Technical proof: image workflows and matching

One of the more interesting backend capabilities in Bibliocards is the image pipeline around scanning and matching.

Collectors can usually recognize a photocard instantly. Computers need a bit more help.

That part of the system is a good example of why the V2 architecture matters. It is not only about cleaner diagrams. It is about supporting real workflows with explicit boundaries between ingestion, processing, matching, and delivery.

When scan jobs are created, they are processed asynchronously through the worker layer. The system fetches the source image, computes a perceptual hash, compares it against stored hashes, ranks likely matches, and returns a confidence-based result.
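The paragraph above does not say which perceptual hash the worker computes. The sketch below assumes a difference hash (dHash), one common perceptual hash. It also assumes an image library has already decoded the image, converted it to grayscale and shrunk it to 9x8 pixels. `differenceHash` is a hypothetical name, not the project's `hashService.computeHash`.

```typescript
// Hypothetical dHash sketch. Input: a 9x8 grayscale image, row-major,
// 8 rows of 9 luminance values (0..255). Output: 64 bits as 16 hex chars.
const HASH_WIDTH = 9;
const HASH_HEIGHT = 8;

export function differenceHash(pixels: readonly number[]): string {
  if (pixels.length !== HASH_WIDTH * HASH_HEIGHT) {
    throw new Error(`expected ${HASH_WIDTH * HASH_HEIGHT} pixels, got ${pixels.length}`);
  }
  let hex = '';
  let nibble = 0;
  let bitsInNibble = 0;
  for (let row = 0; row < HASH_HEIGHT; row++) {
    for (let col = 0; col < HASH_WIDTH - 1; col++) {
      const left = pixels[row * HASH_WIDTH + col];
      const right = pixels[row * HASH_WIDTH + col + 1];
      // One bit per adjacent pair: 1 if brightness increases to the right.
      nibble = (nibble << 1) | (right > left ? 1 : 0);
      bitsInNibble++;
      if (bitsInNibble === 4) {
        hex += nibble.toString(16); // emit one hex digit per 4 bits
        nibble = 0;
        bitsInNibble = 0;
      }
    }
  }
  return hex;
}
```

Because the hash encodes coarse brightness gradients rather than exact pixels, two photos of the same card usually produce hashes that differ in only a few bits. That is what makes a bit-level distance comparison useful in the matching step.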

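```typescript
// Inside the scan job handler: imageBuffer holds the fetched source image
// and jobId identifies the scan job being processed.
const userHash = await this.hashService.computeHash(imageBuffer);

// Compare against the stored photocard hashes to find the closest candidates.
const matches = await this.hashService.findMatches(userHash);

const result: ScanResult = {
  jobId,
  status: 'completed',
  // Expose only what the client needs for each candidate photocard.
  matches: matches.map((m) => ({
    photocardId: m.id,
    distance: m.distance,
    confidence: m.confidence,
  })),
};
```

The matching logic itself is built around perceptual hashing, Hamming distance, and confidence scoring: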

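```typescript
// hammingDistance, distanceToConfidence, HASH_DISTANCE_THRESHOLDS and
// HashMatch are defined elsewhere in the codebase.
export function findHashMatches(
  targetHash: string,
  hashCollection: Array<{ id: string; hash: string }>,
  maxDistance: number = HASH_DISTANCE_THRESHOLDS.MAX,
  limit: number = 10,
): HashMatch[] {
  return (
    hashCollection
      // Hamming distance between the scanned hash and each stored hash.
      .map((item) => ({
        id: item.id,
        distance: hammingDistance(targetHash, item.hash),
      }))
      // Discard candidates too different to be plausible matches.
      .filter((m) => m.distance <= maxDistance)
      // Closest candidates first, capped at `limit` results.
      .sort((a, b) => a.distance - b.distance)
      .slice(0, limit)
      // Convert each distance into a user-facing confidence score.
      .map((m) => ({
        ...m,
        confidence: distanceToConfidence(m.distance),
      }))
  );
}
```

That is the kind of feature where product needs and system design meet directly. It requires image processing, queue-driven execution, backend coordination, and a result model that is good enough to support a user-facing experience.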
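The matching excerpt above calls `hammingDistance` and `distanceToConfidence` without showing them. The sketch below is not the project's actual implementation. It assumes 64-bit hashes stored as equal-length hexadecimal strings and a confidence that falls linearly with distance. `HASH_BITS` and the linear mapping are illustrative choices.

```typescript
// Hypothetical helpers for comparing 64-bit perceptual hashes stored as
// 16-character hexadecimal strings.
const HASH_BITS = 64;
const HEX_PATTERN = /^[0-9a-f]+$/i;

// Count the bits that differ between two hashes of equal length.
export function hammingDistance(a: string, b: string): number {
  if (a.length !== b.length || !HEX_PATTERN.test(a) || !HEX_PATTERN.test(b)) {
    throw new Error('hashes must be hex strings of equal length');
  }
  let distance = 0;
  for (let i = 0; i < a.length; i++) {
    // XOR one hex digit (4 bits) at a time, then count its set bits.
    let diff = parseInt(a.charAt(i), 16) ^ parseInt(b.charAt(i), 16);
    while (diff !== 0) {
      distance += diff & 1;
      diff >>= 1;
    }
  }
  return distance;
}

// Linear mapping: identical hashes give 1, fully opposite hashes give 0.
export function distanceToConfidence(distance: number): number {
  return Math.min(1, Math.max(0, 1 - distance / HASH_BITS));
}
```

For example, `hammingDistance('ff00', 'f0f0')` is 8, and a distance of 16 on a 64-bit hash maps to a confidence of 0.75. A nonlinear curve, or one that reaches 0 at the match threshold, would be an equally valid design choice.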

What I own

- Product direction

- Mobile delivery

- Backend services

- Database design

- Infrastructure and deployment

- Media storage strategy

- Background jobs and automation

- Release workflow

- Operating tradeoffs between reliability, cost, and product quality

That ownership model matters because the strongest decisions on the project were rarely isolated to one layer. Product, infra, backend, and operations regularly affected each other.

What this project proves

Bibliocards is strong portfolio evidence because it is not a demo and not a purely technical exercise.

It is a real product with users, monetization, operational pressure, and architectural consequences.

It proves that I can:

- Turn a narrow utility into a product people actually use

- Keep a platform viable under cost pressure

- Redesign infrastructure when the original setup stops making economic sense

- Improve product quality while restructuring the backend

- Move from a monolithic starting point toward clearer service boundaries

- Make technical decisions that serve both reliability and margin discipline